Current AI uses neural networks. The human brain is a neural network too. Artificial and biological neural networks are similar in the sense that both run lots of computations in parallel. However, there are two important differences between them.

- The human brain trains online whereas (at the time of this post, in 2026) consumer LLMs train offline.

- The human brain propagates action potentials forward only, whereas AI propagates high-fidelity signals both forward and backward.

Online vs Offline Training

When you use ChatGPT, the neural network doesn’t get any smarter. ChatGPT saves ephemeral state in the hidden representations within its transformer architecture. ChatGPT sometimes saves durable state into its context window. But the words you type into ChatGPT don’t update the weights in ChatGPT’s neural network, at least not immediately. Your conversations may be used later when OpenAI does its next big offline training run. In this sense, big AIs like ChatGPT have their networks trained offline. Only the context and hidden representations are updated online. The network weights are updated offline.

The human brain is different. While sleeping can be considered a form of offline learning, the synaptic connections between the neurons in your brain are updated continuously in real time, i.e., online.
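To make the contrast concrete, here is a toy sketch in Python. It is not how ChatGPT or a brain is implemented. The one-weight model `y = w * x`, the class names `OfflineModel` and `OnlineModel`, the learning rate, and the interaction data are all assumptions invented for illustration. The only point is *when* the weight changes: during use, or later in a separate training run.

```python
LEARNING_RATE = 0.1  # hypothetical value chosen for this toy example


def sgd_step(w, x, target, lr=LEARNING_RATE):
    """One gradient step on squared error for the one-weight model y = w * x."""
    return w - lr * (w * x - target) * x


class OfflineModel:
    """Weights are frozen during use; interactions are only logged."""

    def __init__(self):
        self.w = 0.0
        self.log = []  # interactions kept for a later training run

    def use(self, x, target):
        self.log.append((x, target))
        return self.w * x  # the weight is read here, never written

    def training_run(self):
        # The weight only changes here, after the fact.
        for x, target in self.log:
            self.w = sgd_step(self.w, x, target)
        self.log.clear()


class OnlineModel:
    """The weight changes immediately after every interaction."""

    def __init__(self):
        self.w = 0.0

    def use(self, x, target):
        y = self.w * x
        self.w = sgd_step(self.w, x, target)  # update happens during use
        return y


# Hypothetical interactions: (input, desired output) pairs.
interactions = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]

offline, online = OfflineModel(), OnlineModel()
for x, target in interactions:
    offline.use(x, target)
    online.use(x, target)

w_offline_before_run = offline.w  # still the initial weight
offline.training_run()            # now the logged data is finally applied
```

In this sketch both models end up applying the same updates in the same order. The offline model just applies them later, so every response it gave in the meantime came from the old weight.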

Forward-Only Propagation

Signals can flow through artificial neural networks both forward and backward. When you use ChatGPT, the signals flow only forward. But when ChatGPT is trained, the signals flow first forward and then backward.

[TODO image of using ANN forward only]

[TODO image of training ANN forward and then backward]

ChatGPT’s neural network is a mathematical model trained via an algorithm called backpropagation. To train a neural network with backpropagation, you first supply a large set of input–target training data pairs. You feed the input data into the network to get output data. Then you compare the output data to the target to get an error. The error is propagated backwards through the network, and weights are updated in the direction that reduces error.

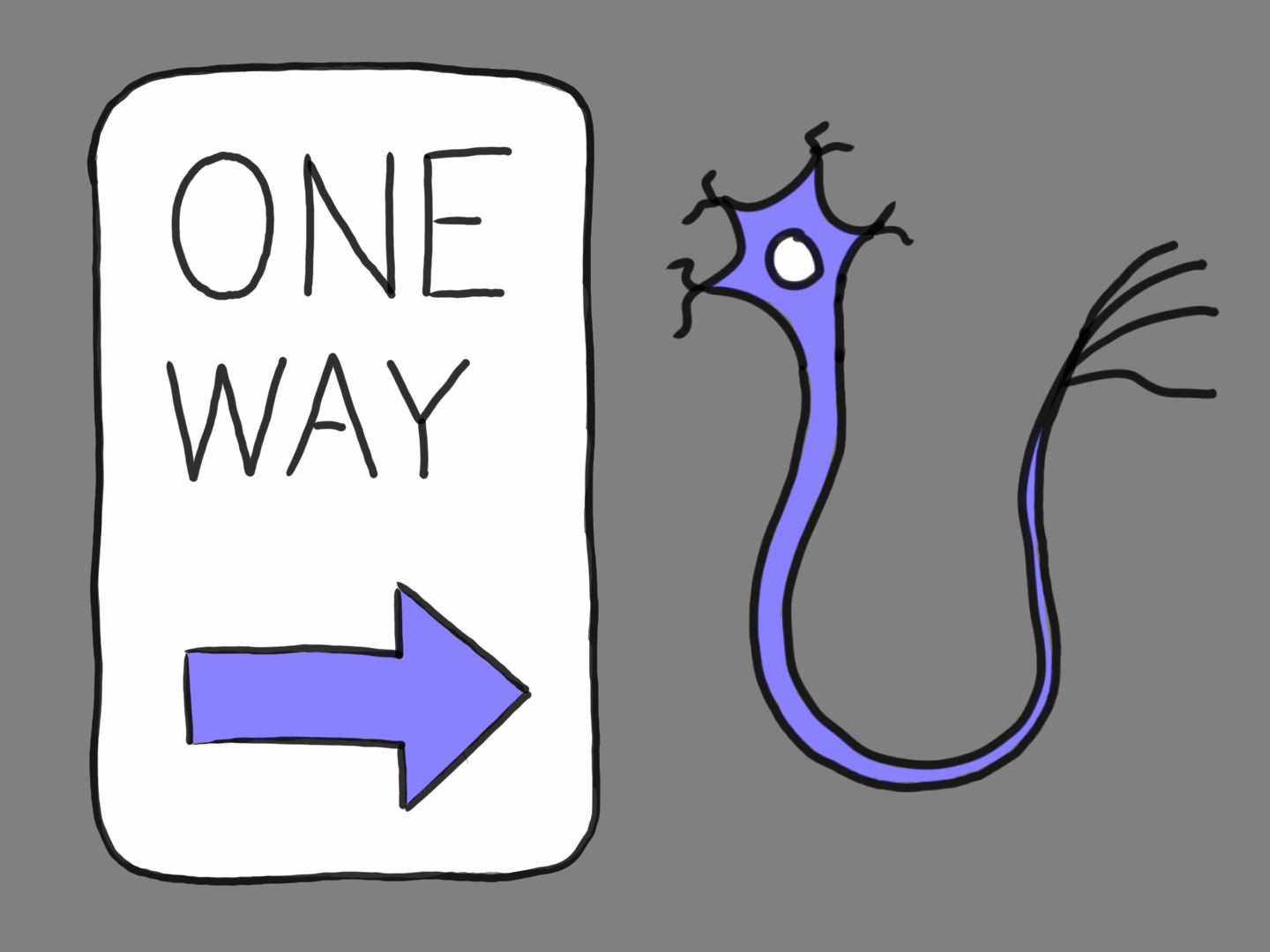
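The forward-then-backward loop described above can be sketched in a few lines of Python. This is a minimal illustration under assumptions I am making up for the example: a two-weight network `y = w2 * tanh(w1 * x)`, a squared-error loss, a fixed learning rate, and four invented input–target pairs. Real systems like ChatGPT have billions of weights and use automatic differentiation, but the shape of the loop is the same.

```python
import math


def forward(w1, w2, x):
    """Forward pass: input -> hidden activation -> output."""
    h = math.tanh(w1 * x)
    y = w2 * h
    return h, y


def backward(w2, x, h, y, target):
    """Backward pass: send the output error back toward each weight."""
    d_y = y - target              # derivative of 0.5 * (y - target)**2 w.r.t. y
    d_w2 = d_y * h                # gradient for the output weight
    d_h = d_y * w2                # error propagated back to the hidden unit
    d_w1 = d_h * (1 - h * h) * x  # back through tanh to the input weight
    return d_w1, d_w2


def train(pairs, w1=0.5, w2=0.5, lr=0.1, epochs=200):
    """Repeat forward pass, backward pass, and weight update over the data."""
    for _ in range(epochs):
        for x, target in pairs:
            h, y = forward(w1, w2, x)
            d_w1, d_w2 = backward(w2, x, h, y, target)
            w1 -= lr * d_w1  # step in the direction that reduces error
            w2 -= lr * d_w2
    return w1, w2


def mean_squared_error(pairs, w1, w2):
    return sum((forward(w1, w2, x)[1] - t) ** 2 for x, t in pairs) / len(pairs)


# Hypothetical input-target training pairs.
pairs = [(-1.0, -0.8), (-0.5, -0.5), (0.5, 0.5), (1.0, 0.8)]

loss_before = mean_squared_error(pairs, 0.5, 0.5)
w1, w2 = train(pairs)
loss_after = mean_squared_error(pairs, w1, w2)
```

Notice that `backward` needs the value `w2` in order to send the error from the output back to the hidden unit. The same connection that carried the signal forward is reused to carry the error backward.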

The human brain does not use backpropagation. How do we know? Because literal gradient propagation is biologically impossible. Here is a picture of a neuron.

[TODO image of a neuron]

On the left are input connectors called dendrites. On the right is an output connector called an axon. When the neuron is activated strongly enough, the axon transmits an action potential.

Notice that action potentials are unidirectional (though neural circuits are often recurrent). Activating dendrites triggers action potentials. The human brain cannot be operating on a foundation of backpropagation because backpropagation requires signals to travel in both directions through the same connections: activations forward and errors backward. But that’s not how action potentials work. Action potentials are one-directional signals.